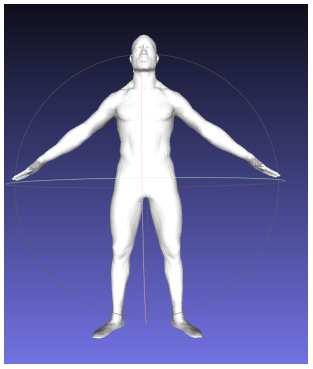

The iToBoS project uses 3D cameras to build a virtual representation of the patient. This representation helps dermatologists match dermoscopic images of lesions to the corresponding lesions on the patient's body.

Example of a virtual patient

Several physical principles can be used to acquire 3D information about the surface of the human body.

Stereo vision for 3D mapping

The principle closest to natural human vision is stereo vision. A stereo vision system typically consists of two cameras that are horizontally offset from each other and therefore capture two different views of a scene, much like human binocular vision with two eyes. Stereo systems recover three-dimensional information by triangulation: the two camera centers and the observed point on the object form a triangle. Because the distance between the cameras (the baseline) is known, the depth of the point follows from how far its image shifts between the two views (the disparity).

Stereo vision is also used in self-driving cars to obtain 3D information about the environment.
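The triangulation principle of stereo vision can be illustrated with a minimal sketch. It assumes an idealized, rectified pair of identical pinhole cameras, which is a simplification and not necessarily the setup used in iToBoS. The function name and the example values for focal length, baseline, and disparity are hypothetical.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by a rectified stereo pair (idealized pinhole model).

    focal_length_px: focal length of both cameras, in pixels (assumed identical)
    baseline_m:      horizontal distance between the two camera centers, in meters
    disparity_px:    horizontal shift of the point between left and right image, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    # Similar triangles: depth / baseline = focal_length / disparity
    return focal_length_px * baseline_m / disparity_px


# Hypothetical example values: 1000 px focal length, 10 cm baseline, 50 px disparity
print(depth_from_disparity(1000.0, 0.1, 50.0))
```

Under this model, nearby points produce a large disparity and distant points a small one, so depth resolution degrades with distance.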

Structured Light

Another way to obtain depth information is structured light. A light pattern with known structural features is projected onto the object, usually by an invisible near-infrared laser of a specific wavelength, and the scene is recorded by a special infrared camera. The surface of the object distorts the pattern. A computing unit then analyzes the distortion of the returned coded pattern in the camera image to determine the position and depth of the object, which yields its 3D structure.

Time of flight

The last technique introduced in this article is time of flight (ToF). A ToF system directs modulated laser light at a target, and a sensor receives the reflected light. The distance to the target follows from the round-trip time of the light. Because light travels so fast, the round-trip time is extremely short and hard to measure directly, so many ToF cameras instead infer it from the phase shift of the modulated light wave.
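A short calculation shows why timing the round trip is demanding. The sketch below uses only the relation distance = speed of light × round-trip time / 2; the function names and the 1 mm target resolution are hypothetical choices for illustration.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # defined value in vacuum


def distance_from_round_trip(round_trip_time_s: float) -> float:
    """Distance to the target: the light covers it twice (out and back)."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def timing_resolution_for(depth_resolution_m: float) -> float:
    """Timing resolution needed to resolve a given depth difference."""
    return 2.0 * depth_resolution_m / SPEED_OF_LIGHT_M_PER_S


# A target 1 m away returns the light after roughly 6.7 nanoseconds.
print(distance_from_round_trip(6.67e-9))

# Resolving 1 mm in depth requires timing on the order of picoseconds.
print(timing_resolution_for(0.001))
```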

ToF methods fall into two general categories according to the modulation method: pulsed modulation and continuous-wave modulation. Pulsed modulation measures the time of flight of the light directly, hence the name direct ToF (dToF). LiDAR commonly uses dToF as well. dToF techniques produce 3D depth maps by sending a pulse of light (usually from a laser) to a target and measuring the arrival time of the reflected pulse with a suitable photodetector and supporting electronics. The range is then computed from the measured time and the speed of light.

In contrast, continuous-wave modulation derives the travel time of the light from phase differences, hence the name indirect ToF (iToF). In iToF, a modulated light wave is transmitted first. The phase deviation between the transmitted and the received signal is then calculated from the ratio of the energy values that the sensor detects in different time windows. The time difference, and with it the distance, follows indirectly from this phase deviation.
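The iToF principle can be sketched with the common four-window ("four-bucket") scheme. This is an illustrative assumption, not a description of any specific sensor: the sample model, the function names, and the 20 MHz modulation frequency are hypothetical, and real sensors differ in sign conventions and calibration.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def simulate_samples(distance_m, mod_freq_hz, amplitude=1.0, offset=2.0):
    """Idealized energy values in four time windows shifted by 0, 90, 180, 270 degrees."""
    phase = 4.0 * math.pi * mod_freq_hz * distance_m / SPEED_OF_LIGHT_M_PER_S
    return [offset + amplitude * math.cos(phase + k * math.pi / 2.0) for k in range(4)]


def distance_from_samples(samples, mod_freq_hz):
    """Recover the phase deviation from the four windows, then convert it to distance."""
    a0, a1, a2, a3 = samples
    # The differences cancel the constant offset (for example, ambient light).
    phase = math.atan2(a3 - a1, a0 - a2) % (2.0 * math.pi)
    return SPEED_OF_LIGHT_M_PER_S * phase / (4.0 * math.pi * mod_freq_hz)


def unambiguous_range(mod_freq_hz):
    """Beyond this distance the phase wraps around and the result becomes ambiguous."""
    return SPEED_OF_LIGHT_M_PER_S / (2.0 * mod_freq_hz)


mod_freq_hz = 20e6  # hypothetical modulation frequency
samples = simulate_samples(1.5, mod_freq_hz)
print(distance_from_samples(samples, mod_freq_hz))
print(unambiguous_range(mod_freq_hz))
```

The trade-off in this sketch is that a higher modulation frequency improves depth precision for a given phase error but shortens the unambiguous range.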