Anonymization is a process that aims to modify data so that individuals cannot be identified, and so that their identification (for example, by correlation with other data sources) is not probable.

Current state-of-the-art anonymization techniques address the following risks associated with releasing data [1]:

- Singling out: isolating some or all records to identify an individual in a data set.

- Inference: deducing with high probability the value of an attribute.

- Linkability: linking records from possibly different data sets to establish they belong to the same individual(s). Note that linking presents a risk if it leads to Singling out or Inference.

Next, we will provide a high-level description of several anonymization approaches aimed at releasing data sets.

Randomization techniques alter the data so that the strong link between the individual and the data is removed, which prevents inference.

Data perturbation, which adds noise directly to the values, has been one of many techniques addressing privacy since the mid-1970s [2]. The idea is to add random noise (usually drawn from a normal or uniform distribution) to each value. In the work of Agrawal and Srikant [3], privacy is quantified by how closely an original value can be estimated. For example, if the original value can be estimated to fall in an interval $[\alpha_1, \alpha_2]$ with confidence $c\%$, then the interval width $(\alpha_2 - \alpha_1)$ defines the amount of privacy at the $c\%$ confidence level. Essentially, privacy is measured by how difficult it is to estimate the original value from the perturbed value.
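As a rough illustration of this interval-width measure, the following minimal sketch perturbs a few values and computes the width of the interval that contains the noise with a given confidence. The function names and the sample ages are hypothetical, and the calculation looks only at the noise distribution, ignoring anything an adversary may already know about the original data.

```python
import random
from statistics import NormalDist

def perturb(values, noise_sampler):
    """Return a new list with independent random noise added to each value."""
    return [v + noise_sampler() for v in values]

def uniform_privacy_width(alpha, confidence):
    """Width of an interval holding noise uniform on [-alpha, alpha]
    with the given confidence (a fraction between 0 and 1)."""
    return 2 * alpha * confidence

def gaussian_privacy_width(sigma, confidence):
    """Width of the symmetric interval holding zero-mean Gaussian noise
    with standard deviation sigma at the given confidence."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return 2 * z * sigma

rng = random.Random(0)          # fixed seed so the sketch is repeatable
ages = [23, 35, 41, 58, 62]     # made-up example values
noisy_ages = perturb(ages, lambda: rng.uniform(-10, 10))

print(uniform_privacy_width(10, 0.95))    # interval width for uniform noise
print(gaussian_privacy_width(1.0, 0.95))  # interval width for Gaussian noise
```

A wider interval at the same confidence level means the original value is harder to pin down, at the cost of noisier released data.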

However, the work in [4] shows that the privacy protection offered by adding random noise can be breached. The authors propose a method that is able to closely estimate the original values. The assumption is that the adversary has the perturbed (disguised) data set as well as the distribution of the random noise. The adversary's goal is to reconstruct the probability distribution of the original data. Similarly, the work in [5] shows that correlation between data attributes can be used to reconstruct the data more accurately, and proposes a solution that adds noise with a similar correlation structure in order to reduce the reconstruction accuracy.
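To give a feel for why knowing the noise distribution helps an adversary, here is a deliberately simple sketch. It is not the reconstruction method of [4] or [5]; it only recovers the mean and variance of the original data, under the assumption that the noise is independent of the data. All names and parameters are hypothetical.

```python
import random
from statistics import fmean, pvariance

def estimate_original_moments(perturbed, noise_mean, noise_variance):
    """Estimate the mean and variance of the original data.

    Assumes noise R is independent of the original data X, so for Y = X + R:
    mean(Y) = mean(X) + mean(R) and var(Y) = var(X) + var(R).
    """
    est_mean = fmean(perturbed) - noise_mean
    est_variance = max(pvariance(perturbed) - noise_variance, 0.0)
    return est_mean, est_variance

rng = random.Random(42)  # fixed seed so the sketch is repeatable

# Synthetic "original" data: mean 50, standard deviation 5 (variance 25).
original = [rng.gauss(50.0, 5.0) for _ in range(50_000)]

# The data holder releases values perturbed with uniform noise on [-alpha, alpha].
alpha = 20.0
perturbed = [x + rng.uniform(-alpha, alpha) for x in original]

# The adversary knows the noise distribution, hence its mean and variance.
noise_variance = (2 * alpha) ** 2 / 12
est_mean, est_variance = estimate_original_moments(perturbed, 0.0, noise_variance)
print(est_mean, est_variance)
```

Even though each released value may be off by up to 20, the aggregate properties of the original data remain recoverable; the attacks cited above go further and estimate individual values.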

Generalization techniques generalize data attributes (e.g. a birth date is generalized into a birth year), which prevents singling out.

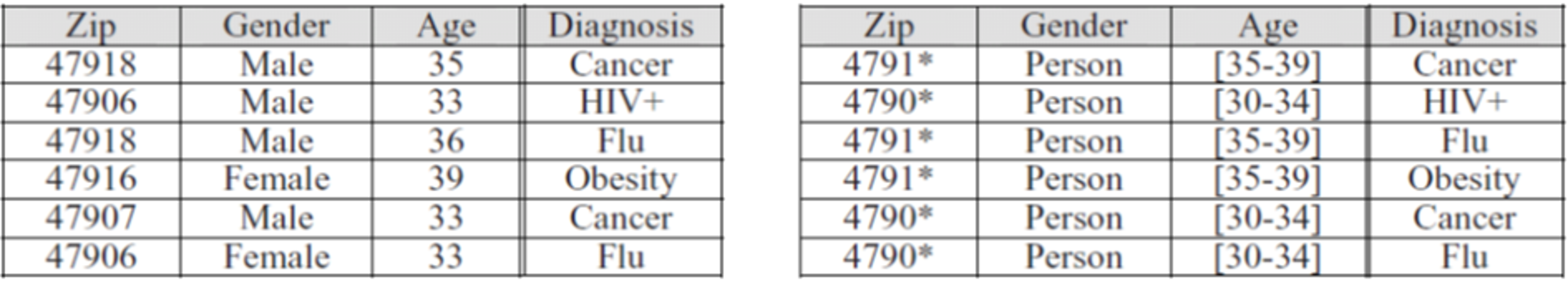

Figure 1 illustrates the generalization of the zip, gender and age attributes (left table). The resulting data set (right table) is k-anonymized with respect to zip, gender and age: each patient cannot be distinguished from at least 2 other patients (k=3).

Figure 1 - Generalizing data attributes to achieve k-anonymization [6]
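The kind of generalization shown in Figure 1 can be sketched in a few lines. The records below are made up for illustration and are not the rows from the figure; the generalization rules (keeping a 3-digit zip prefix, bucketing age by decade) and the function names are assumptions as well.

```python
from collections import Counter

def generalize(record):
    """Coarsen quasi-identifiers: keep a 3-digit zip prefix, bucket age by decade."""
    decade = (record["age"] // 10) * 10
    return {
        **record,
        "zip": record["zip"][:3] + "**",
        "age": f"{decade}-{decade + 9}",
    }

def anonymity_level(records, quasi_identifiers):
    """Size of the smallest group of records sharing the same quasi-identifier values."""
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(groups.values(), default=0)

# Hypothetical patient records.
patients = [
    {"zip": "47677", "gender": "M", "age": 29, "diagnosis": "flu"},
    {"zip": "47602", "gender": "M", "age": 22, "diagnosis": "asthma"},
    {"zip": "47678", "gender": "M", "age": 27, "diagnosis": "flu"},
    {"zip": "47905", "gender": "F", "age": 43, "diagnosis": "diabetes"},
    {"zip": "47909", "gender": "F", "age": 49, "diagnosis": "flu"},
    {"zip": "47906", "gender": "F", "age": 47, "diagnosis": "asthma"},
]
quasi_identifiers = ["zip", "gender", "age"]

released = [generalize(p) for p in patients]
print(anonymity_level(patients, quasi_identifiers))  # every raw record is unique
print(anonymity_level(released, quasi_identifiers))  # smallest group after generalization
```

A data set is k-anonymous for the chosen attributes when `anonymity_level` returns at least k.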

Generalization techniques, particularly aggregation and k-anonymity, have been widely studied. k-anonymity algorithms such as Mondrian, cluster-based, Hilbert and R+-tree based algorithms produce anonymized results while keeping enough information in the data set that it is still useful. As k-anonymization by itself does not prevent inference attacks, additional techniques (e.g. l-diversity, t-closeness) are usually applied on top of k-anonymity to prevent attribute values from being identified.
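The inference problem can be made concrete with a small check. The sketch below measures the simplest variant of l-diversity (the number of distinct sensitive values per group); the table and the function name are hypothetical, and the original l-diversity proposals also define stronger variants that are not covered here.

```python
from collections import defaultdict

def distinct_l_diversity(records, quasi_identifiers, sensitive):
    """Smallest number of distinct sensitive values found in any group of
    records that share the same quasi-identifier values."""
    groups = defaultdict(set)
    for r in records:
        groups[tuple(r[q] for q in quasi_identifiers)].add(r[sensitive])
    return min((len(values) for values in groups.values()), default=0)

# A hypothetical 3-anonymous release: two groups of three records each.
released = [
    {"zip": "476**", "age": "20-29", "diagnosis": "flu"},
    {"zip": "476**", "age": "20-29", "diagnosis": "flu"},
    {"zip": "476**", "age": "20-29", "diagnosis": "flu"},
    {"zip": "479**", "age": "40-49", "diagnosis": "flu"},
    {"zip": "479**", "age": "40-49", "diagnosis": "asthma"},
    {"zip": "479**", "age": "40-49", "diagnosis": "diabetes"},
]

print(distinct_l_diversity(released, ["zip", "age"], "diagnosis"))
```

In the first group every record has the same diagnosis, so anyone known to be in that group has their diagnosis revealed even though no single record can be singled out.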

The goal of k-crowd blending [7] is to improve the utility and performance of differential privacy and zero-knowledge privacy while still providing strong privacy guarantees. To achieve both differential privacy and zero-knowledge privacy guarantees, it relies on the assumption that the data set was collected via random sampling from some underlying distribution. This assumption may be relaxed somewhat (allowing for some bias, and allowing the adversary to know whether certain individuals were sampled), but it is still required. k-Crowd blending can be achieved by the mechanism proposed by Li et al. [8]. That work links k-anonymity and differential privacy and shows that “hiding in a crowd of k” can provide a strong privacy guarantee: adding a random sampling step before a “safe” k-anonymization algorithm can provide (ϵ, δ)-differential privacy.
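The overall shape of such a mechanism can be sketched as follows. This is a simplified reading, not the algorithm or the analysis from [8]: "safe" is interpreted here as a generalization function fixed in advance rather than chosen from the data, small groups are simply suppressed, and no attempt is made to compute the resulting ϵ and δ. The names `sample_then_k_anonymize`, `by_decade` and the parameter `beta` are hypothetical.

```python
import random
from collections import Counter

def sample_then_k_anonymize(records, generalize, k, beta, rng):
    """Keep each record with probability beta, generalize it with a fixed
    function, then suppress every group smaller than k."""
    sampled = [r for r in records if rng.random() < beta]
    generalized = [generalize(r) for r in sampled]
    counts = Counter(generalized)
    return [g for g in generalized if counts[g] >= k]

def by_decade(age):
    """Data-independent generalization: map an age to the start of its decade."""
    return (age // 10) * 10

ages = [21, 22, 23, 25, 28, 45, 47, 63]   # made-up example values
rng = random.Random(7)                     # fixed seed so the sketch is repeatable

release = sample_then_k_anonymize(ages, by_decade, k=3, beta=0.8, rng=rng)
print(release)
```

Every value that survives appears at least k times in the output, and because of the sampling step an adversary cannot be sure whether any particular individual contributed to the release at all.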

Bibliography

[1] Article 29 Data Protection Working Party (independent European advisory body on data protection and privacy), "Opinion 05/2014 on Anonymisation Techniques," adopted on 10 April 2014.

[2] T. Dalenius, "Toward a methodology for statistical disclosure control," 1977.

[3] R. Agrawal and R. Srikant, "Privacy-Preserving Data Mining," in ACM SIGMOD Conference on Management of Data, 2000.

[4] H. Kargupta, S. Datta, Q. Wang and K. Sivakumar, "On the Privacy Preserving Properties of Random Data Perturbation Techniques," in Proceedings of the Third IEEE International Conference on Data Mining, 2003.

[5] Z. Huang, W. Du and B. Chen, "Deriving Private Information from Randomized Data," in Proceedings of the 2005 ACM SIGMOD International Conference on Management of Data, 2005.

[6] C.-C. Chiu and C.-Y. Tsai, "A k-Anonymity Clustering Method for Effective Data Privacy Preservation," in Advanced Data Mining and Applications, Springer, 2007, pp. 89-99.

[7] J. Gehrke, M. Hay, E. Lui and R. Pass, "Crowd-Blending Privacy," in Advances in Cryptology – CRYPTO 2012. Lecture Notes in Computer Science, 2012.

[8] N. Li, W. Qardaji and D. Su, "Provably Private Data Anonymization: Or, k-Anonymity Meets Differential Privacy," CoRR, 2011.

Micha Moffie, IBM.