Diffusion models are a class of likelihood-based models that have recently produced synthetic images of excellent quality.

Until recently, GANs were considered the state-of-the-art approach for image generation. Although they produce impressive results, they have limitations: they are difficult to train, and they capture less diversity than likelihood-based models.

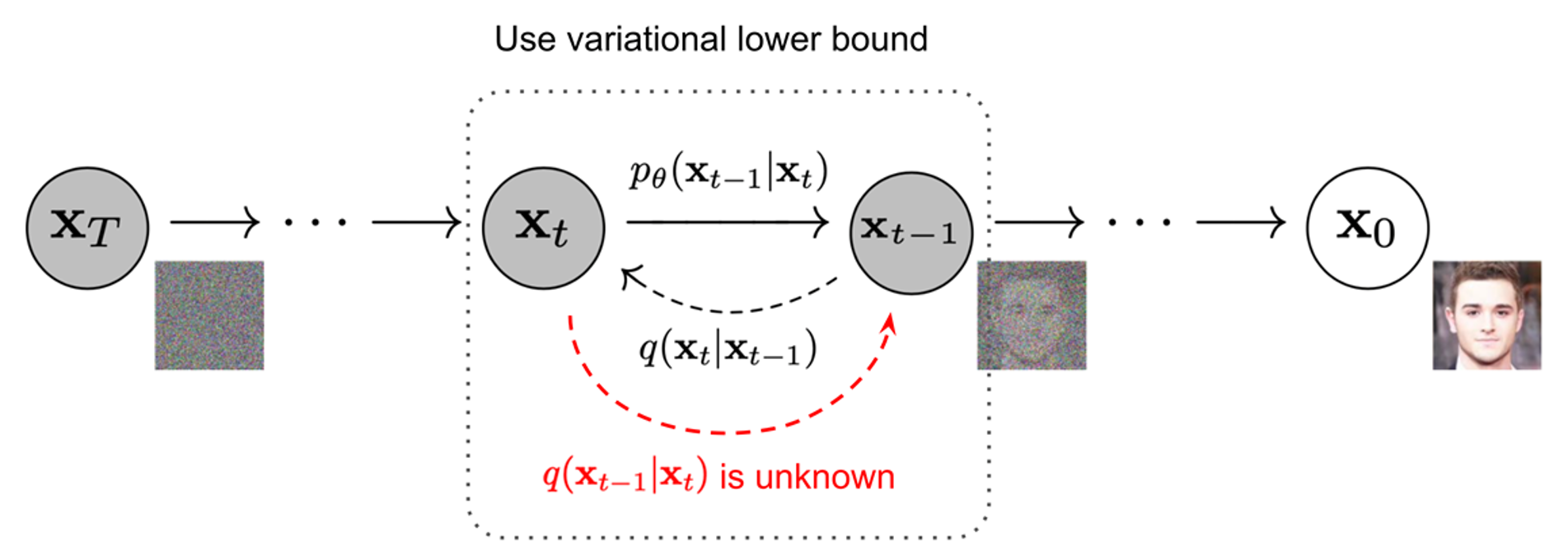

Diffusion models produce new samples by removing noise from a signal in a gradual manner. They are inspired by non-equilibrium thermodynamics. Diffusion models are setting a Markov chain of diffusion steps to add random noise to original data, followed by learning to undo it and construct data samples from noise.

Fig. 1 Graphical model architecture [2].
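To make the noise-adding Markov chain concrete, here is a minimal sketch of the forward process in the formulation of [2]. It relies on the fact that, for Gaussian noise, a noisy sample at any step t can be drawn directly from the original data without iterating through the earlier steps. The linear schedule (1000 steps, variances from 1e-4 to 0.02), the toy data vector, and all function names are assumptions made for illustration. Only the Python standard library is used so the snippet runs anywhere; real implementations operate on image tensors.

```python
import math
import random

def linear_beta_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Noise variances beta_1..beta_T, evenly spaced (an assumed schedule)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def forward_diffuse(x0, t, alpha_bars, rng):
    """Draw x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps, eps ~ N(0, 1)."""
    signal = math.sqrt(alpha_bars[t])
    sigma = math.sqrt(1.0 - alpha_bars[t])
    return [signal * v + sigma * rng.gauss(0.0, 1.0) for v in x0]

rng = random.Random(0)
alpha_bars = cumulative_alphas(linear_beta_schedule())
x0 = [0.5, -0.25, 1.0, 0.0]                          # toy "data" vector (hypothetical)
x_early = forward_diffuse(x0, 10, alpha_bars, rng)   # early step: still close to x0
x_late = forward_diffuse(x0, 999, alpha_bars, rng)   # last step: almost pure noise
```

With this schedule the signal coefficient is close to 1 at the first steps and close to 0 at the last one, which is why the end of the chain is indistinguishable from Gaussian noise.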

Interestingly, the noise-adding process can be reversed: starting from Gaussian noise and sampling backward through the steps t, the model can recover a sample that resembles the true data. The reverse of a diffusion process is itself a diffusion process.
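The reversal can be illustrated with a toy case in which no training is needed. The sketch below assumes one-dimensional data drawn from a Gaussian with mean 2.0 and standard deviation 0.5, so the noise that a network would normally have to predict is available in closed form. It then applies the step-by-step sampling rule of [2], using the forward variance as the noise scale of each reverse step (one of the two choices discussed there). The data distribution, the schedule, and the function names are all assumptions for illustration; in a real model `exact_noise_prediction` would be replaced by a trained neural network.

```python
import math
import random

MU, SIGMA = 2.0, 0.5   # assumed toy data distribution: x_0 ~ N(MU, SIGMA^2)
T = 1000               # assumed number of diffusion steps

# Assumed linear noise schedule and its cumulative products.
betas = [1e-4 + i * (0.02 - 1e-4) / (T - 1) for i in range(T)]
alpha_bars, running = [], 1.0
for beta in betas:
    running *= 1.0 - beta
    alpha_bars.append(running)

def exact_noise_prediction(x_t, t):
    """E[eps | x_t] for Gaussian data; stands in for a trained network."""
    ab = alpha_bars[t]
    marginal_var = ab * SIGMA ** 2 + (1.0 - ab)   # variance of x_t
    return math.sqrt(1.0 - ab) * (x_t - math.sqrt(ab) * MU) / marginal_var

def sample(rng):
    """Run the reverse chain from pure noise down to a data sample."""
    x = rng.gauss(0.0, 1.0)                       # x_T: pure Gaussian noise
    for t in range(T - 1, -1, -1):
        eps_hat = exact_noise_prediction(x, t)
        # Mean of the reverse step: remove the predicted noise, then rescale.
        mean = (x - betas[t] / math.sqrt(1.0 - alpha_bars[t]) * eps_hat)
        mean /= math.sqrt(1.0 - betas[t])
        noise = rng.gauss(0.0, 1.0) if t > 0 else 0.0   # no noise on the final step
        x = mean + math.sqrt(betas[t]) * noise
    return x

rng = random.Random(0)
samples = [sample(rng) for _ in range(500)]
sample_mean = sum(samples) / len(samples)
sample_std = math.sqrt(sum((s - sample_mean) ** 2 for s in samples) / len(samples))
```

Every sample starts as standard Gaussian noise, yet the empirical mean and standard deviation of `samples` should land near the assumed data values, which is the sense in which the reverse chain recovers the data distribution.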

Compared with GANs, diffusion models cover more of the data distribution while also producing higher-quality images [1]. One disadvantage is that sampling is slow, because it requires a sequence of denoising steps, although recent work has proposed several techniques to speed it up.

Gabriela Ghimpeteanu, Coronis Computing.

Bibliography

[1] Prafulla Dhariwal and Alex Nichol. Diffusion Models Beat GANs on Image Synthesis. arXiv preprint arXiv:2105.05233, 2021.

[2] Jonathan Ho, Ajay Jain and Pieter Abbeel. Denoising Diffusion Probabilistic Models. arXiv preprint arXiv:2006.11239, 2020.