Privacy and Compliance for AI has been the topic of a few of our recent blog posts. The field is well studied in academia, but it is still relatively new in industry.

Practitioners who work with AI are often unaware of the privacy aspects specific to their models, or may think that there is no good solution for preserving privacy in ML models. To address this, we have created our first online course on AI Privacy and Compliance, freely and publicly available to anyone who wants to learn more about this topic.

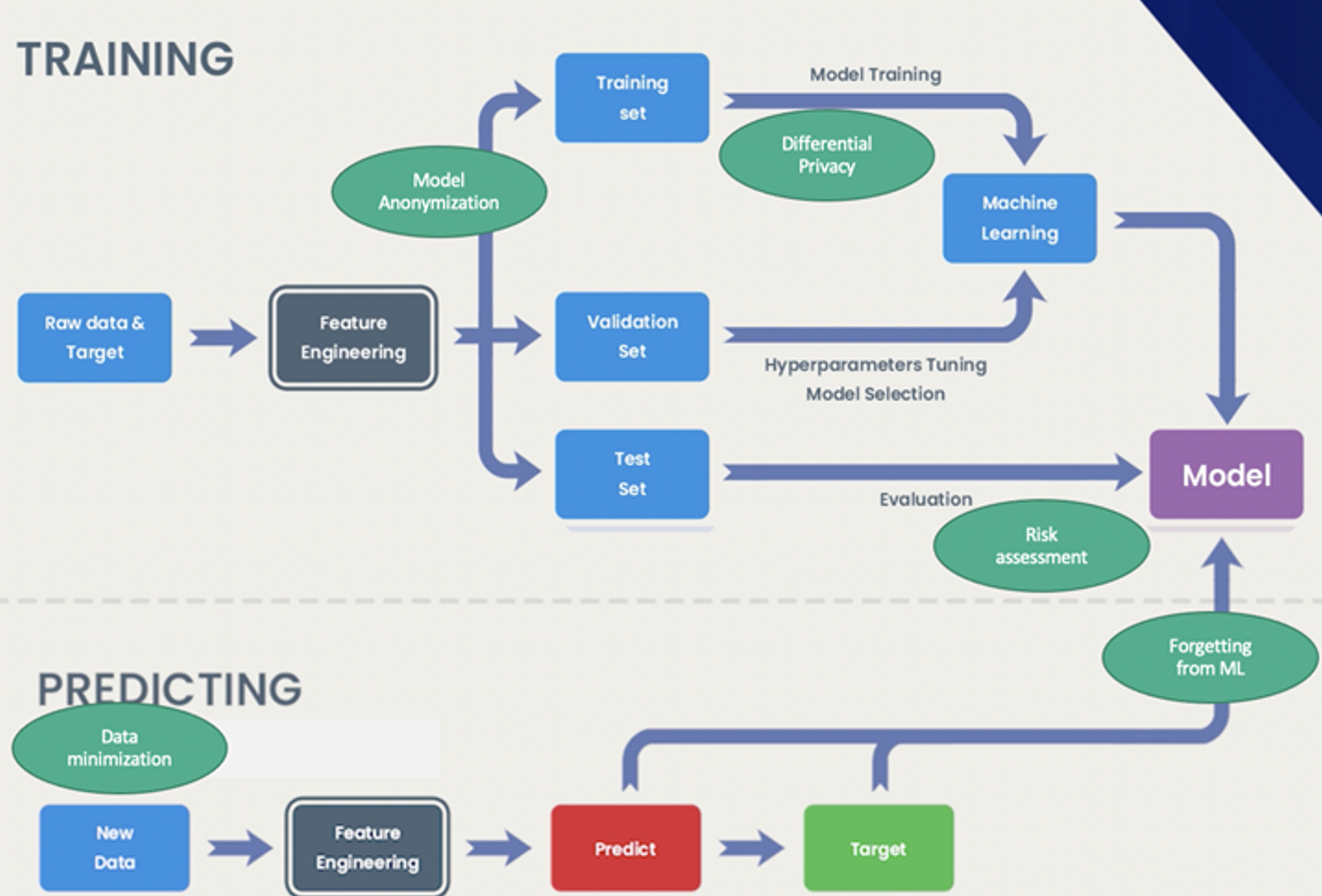

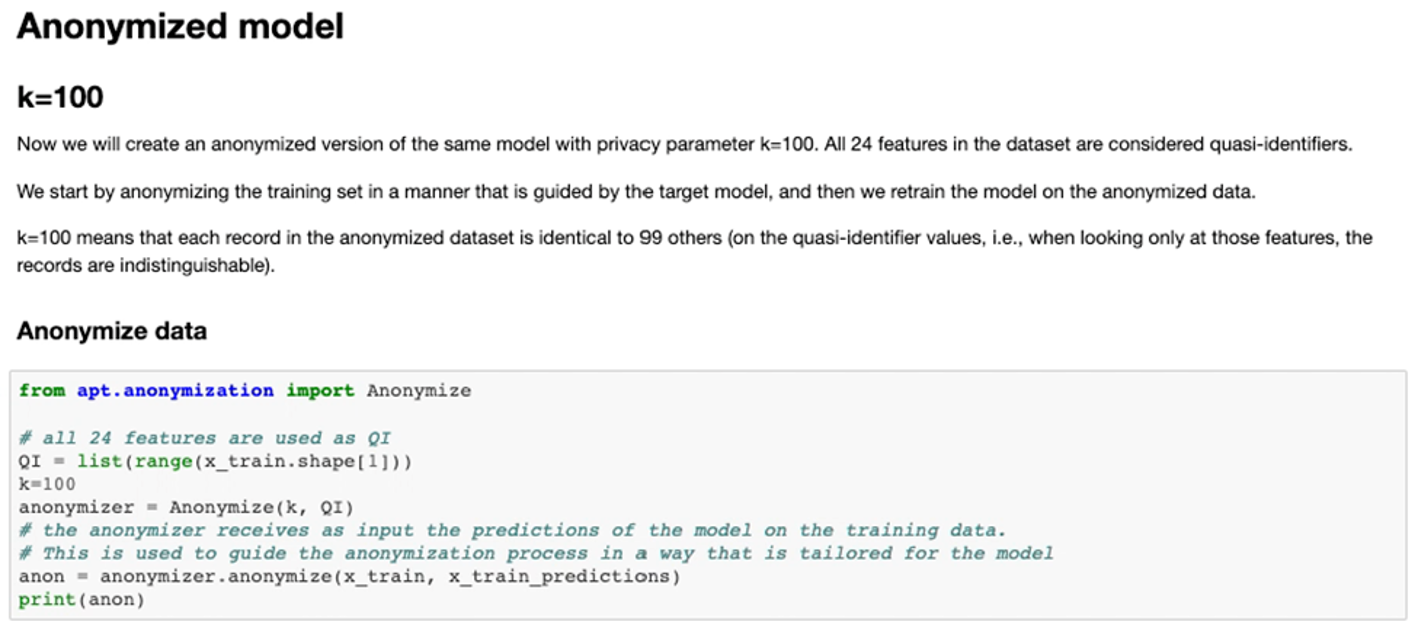

The course places AI Privacy in the wider context of trustworthy (or responsible) AI. It covers what privacy means for AI models and why it differs from data privacy, how to assess the privacy risk of models, how to mitigate privacy risks using technologies such as model anonymization and differential privacy, and additional compliance aspects such as data minimization and the right to be forgotten.

It also covers some existing open-source toolkits and how to use them to assess existing models or create privacy-preserving models.

The course is designed for audiences including practicing Data Scientists, Machine Learning engineers, AI specialists and anyone with an interest in AI Trust and Privacy.

To take the course, you only need a basic understanding of the machine learning workflow and some knowledge of Python. It takes about four hours to complete. For more advanced users, we also provide guided notebooks and additional exercises for practicing the material.

So if your work is related to AI models or machine learning involving information about people, or if you find this field interesting, please check out our course Accomplishing AI Privacy and Compliance with IBM Privacy Toolkits.