Digital medical imaging has become a fundamental tool for medical diagnosis.

Privacy protection is a major requirement for the success of diagnosis systems, and it becomes even more critical in collaborative projects such as iToBoS, where data is shared among institutions and practitioners. While textual data can be easily de-identified, patient data in medical images requires a more elaborate approach.

The inappropriate use of personal data has driven the public debate on data protection to an unprecedented level, culminating in the GDPR, which took effect in May 2018. According to Art. 4 of the GDPR, personal data is defined as: “[...] any information relating to an identified or identifiable natural person”. Because faces and body parts are the most fundamental and highly visible elements of our identity, images of them fall under this definition and, consequently, under the GDPR.

Image anonymization is therefore a crucial step in the iToBoS project to meet data protection and privacy requirements.

Image Anonymization Methods

Anonymization is a processing operation that applies a set of techniques so that it becomes impossible, in practice, to identify the person by any means whatsoever, and does so irreversibly.

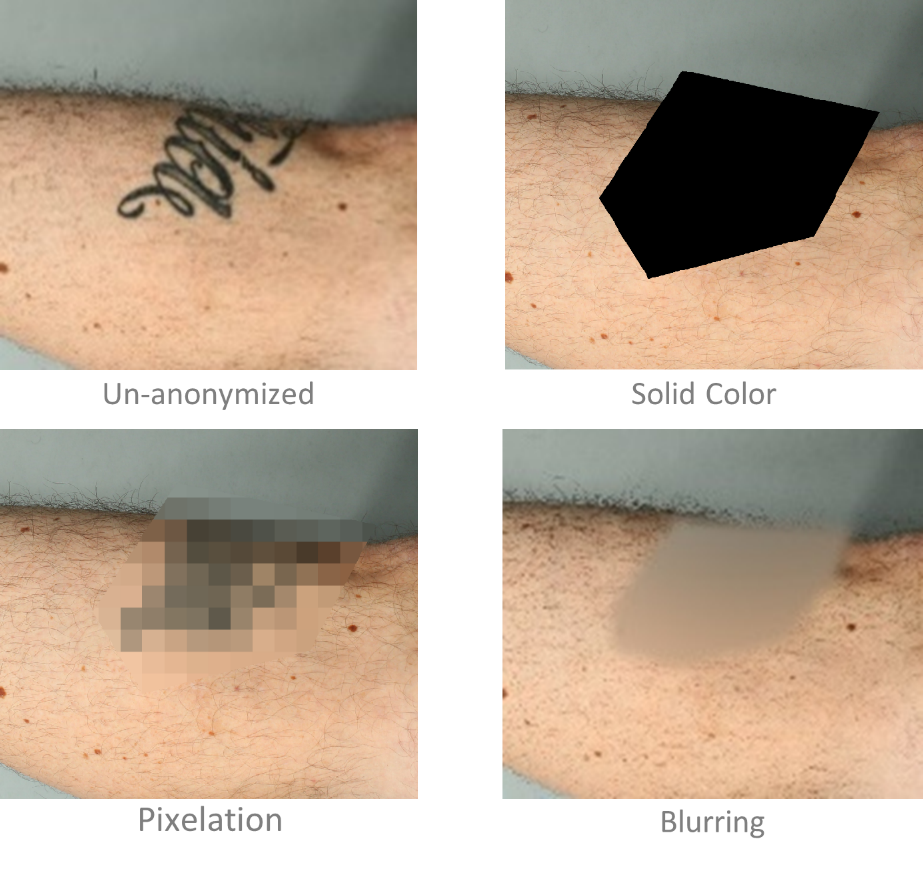

Traditional, effective methods are:

- Replacing the identifiable area with a solid color.

- Pixelation, i.e., reducing the resolution of the identifiable areas in the image.

- Blurring parts of the image to make them difficult to identify.
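The three traditional methods above can be sketched in a few lines of NumPy. This is a minimal illustration, not the iToBoS implementation: the function names (`mask_solid`, `pixelate`, `box_blur`), the `(top, left, bottom, right)` box convention, and the default block size and blur radius are all assumptions chosen for the example, and the region to anonymize is assumed to be already known (for instance from a face detector).

```python
import numpy as np


def mask_solid(image, box, color=0):
    """Replace the boxed region with a solid color (hypothetical helper)."""
    top, left, bottom, right = box
    out = image.copy()
    out[top:bottom, left:right] = color
    return out


def pixelate(image, box, block=8):
    """Lower the resolution of the boxed region by averaging block x block tiles."""
    top, left, bottom, right = box
    out = image.copy()
    for y in range(top, bottom, block):
        for x in range(left, right, block):
            y2, x2 = min(y + block, bottom), min(x + block, right)
            tile = image[y:y2, x:x2]
            # Every pixel in the tile takes the tile's mean value (per channel).
            out[y:y2, x:x2] = np.rint(tile.mean(axis=(0, 1))).astype(image.dtype)
    return out


def box_blur(image, box, radius=2):
    """Blur the boxed region with a (2*radius+1) x (2*radius+1) mean filter."""
    top, left, bottom, right = box
    height, width = bottom - top, right - left
    k = 2 * radius + 1
    # Pad the two spatial axes so the filter is defined at the image border.
    pad = [(radius, radius)] * 2 + [(0, 0)] * (image.ndim - 2)
    padded = np.pad(image.astype(np.float64), pad, mode="edge")
    acc = np.zeros((height, width) + image.shape[2:], dtype=np.float64)
    # Sum the k*k shifted copies of the neighborhood, then divide to average.
    for dy in range(k):
        for dx in range(k):
            acc += padded[top + dy:top + dy + height, left + dx:left + dx + width]
    out = image.copy()
    out[top:bottom, left:right] = np.rint(acc / (k * k)).astype(image.dtype)
    return out
```

A call such as `pixelate(img, (16, 16, 48, 48), block=8)` returns a copy of `img` in which only the boxed region is altered. Note that a mild blur or coarse pixelation may not be irreversible in the strict sense defined above, so the block size and radius would need to be chosen with the re-identification risk in mind.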

Following recent advances in AI and deep learning, new techniques such as DeepPrivacy [1] and CLEANIR [2], based on the generative adversarial network (GAN) framework, are being developed as novel tools for image anonymization. While traditional methods are still effective, computationally inexpensive, and widely used, they tend to change the distribution of the image, which makes later computer vision tasks such as detection and tracking more difficult. One advantage of the recent techniques is that they can automatically anonymize parts of an image, such as faces, while the original data distribution remains largely preserved.

The overall model of DeepPrivacy. Source: DeepPrivacy [1].

References:

- Hukkelås, Håkon, Rudolf Mester, and Frank Lindseth. "DeepPrivacy: A generative adversarial network for face anonymization." International Symposium on Visual Computing. Springer, Cham, 2019.

- Maximov, Maxim, Ismail Elezi, and Laura Leal-Taixé. "CIAGAN: Conditional identity anonymization generative adversarial networks." CVPR, 2020.

- Blog.ml6.eu.

- Medium.com.