We are all familiar with text search, which returns documents similar to our query. It is also possible to perform the same kind of search with images.

Image similarity search is a technique for finding images that share common features. It can be performed at two different levels depending on the objective:

- search for the exact same object in images.

- search for objects that look similar but are not necessarily the exact same object.

We can show some examples below:

How is this technique applied in the iToBoS project?

When reconstructing the 3D avatar of a patient, one important step is to match moles that are the same but were captured by different cameras and thus appear in several images (up to 10 images). By grouping them, we avoid inconsistencies in diagnosis, and we also provide more information about each lesion through different images and points of view. We call this step “mole matching”.

What are the state-of-the-art neural network methods for mole matching?

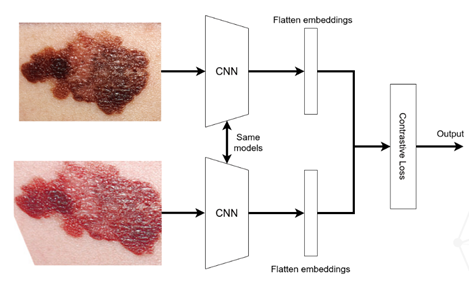

One method used for image similarity search is the Siamese network. A pair of images passes through two convolutional neural network (CNN) branches that share the same weights, and each image is encoded into an embedding vector. The output is a distance between the two vectors (for example, the Euclidean distance): the lower the distance, the higher the similarity between the images. During training, the contrastive loss decreases the distance for a positive pair (same object) and increases it for a negative pair (not the same object). Finally, for prediction, we need to fix a threshold on the distance to separate the pairs the model predicts as positive from those it predicts as negative.
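
To make the role of the distance, the contrastive loss and the threshold more concrete, here is a minimal sketch in plain Python. It is not the iToBoS implementation: the embeddings are hypothetical hand-written vectors standing in for the CNN outputs, the Euclidean distance is an assumed choice of metric, and the function names, the `margin` and the `threshold` values are illustrative assumptions. The loss is written in one common form, without the 1/2 factor that some formulations use.

```python
import math


def euclidean_distance(a, b):
    """Euclidean distance between two embedding vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def contrastive_loss(distance, is_same, margin=1.0):
    """Contrastive loss for a single pair (one common formulation).

    A positive pair (is_same=True) is penalised by its squared distance,
    so training pulls the two embeddings together. A negative pair is
    penalised only while it is closer than `margin`, so training pushes
    the two embeddings apart until the margin is reached.
    """
    if is_same:
        return distance ** 2
    return max(0.0, margin - distance) ** 2


def predict_same(distance, threshold=1.0):
    """Predict a positive pair when the distance is below the threshold."""
    return distance < threshold


# Hypothetical embeddings; in practice they come from the shared-weight CNN.
anchor = [0.0, 0.0]
same_mole = [0.3, 0.4]   # close to the anchor
other_mole = [3.0, 4.0]  # far from the anchor

for name, emb in [("same_mole", same_mole), ("other_mole", other_mole)]:
    d = euclidean_distance(anchor, emb)
    print(name, "distance:", d, "predicted same:", predict_same(d))
```

In a real setting the threshold would be tuned on a validation set of labelled pairs rather than fixed by hand.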