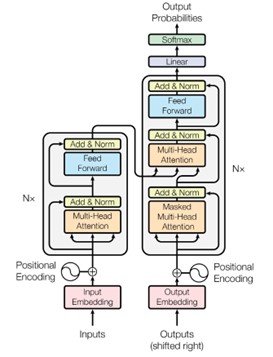

The paper ‘Attention Is All You Need’ introduces the Transformer, a sequence-to-sequence architecture built on attention.

The Transformer is a neural network that transforms one sequence of elements (for example, the words of a sentence) into another sequence. It models long-range dependencies without recurrence or convolutions, relying only on attention mechanisms, chiefly self-attention. Because no position has to wait for the hidden state of the previous one, the computation parallelizes far better than in recurrent models.
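To make the idea of self-attention concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is an illustration under simplifying assumptions, not the paper's full layer: the function names `softmax` and `self_attention` and the toy `tokens` are made up for this example, and the learned query, key, and value projections are replaced by the identity (Q = K = V = x). Multi-head attention, masking, and positional encodings are left out.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """Scaled dot-product self-attention with identity projections.

    x is a list of token vectors (each a list of floats).
    Assumption: a real Transformer layer first maps x to queries, keys,
    and values with learned weight matrices; here Q = K = V = x.
    """
    d = len(x[0])
    outputs, all_weights = [], []
    for query in x:
        # Similarity of this position to every position, scaled by sqrt(d).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in x
        ]
        weights = softmax(scores)
        # Each output is the attention-weighted average of all value vectors.
        out = [
            sum(w * value[j] for w, value in zip(weights, x))
            for j in range(d)
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy "token" vectors of dimension 2 (hypothetical data).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens)
```

Every output position is computed from all input positions in a single step, so the distance between two tokens does not affect how directly they can interact. This is what lets the model capture long-range dependencies without stepping through the sequence one element at a time.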