Transformers are deep learning architectures designed for sequence-to-sequence tasks such as language translation. They were proposed in [1].

This architecture is extremely powerful and has been applied in numerous systems: BERT, which learns high-level language representations; GPT-2 and GPT-3, which use a decoder-only transformer for generative language modelling; AlphaFold2, applied to the protein folding task; and DALL-E 2, which produces impressive image generations using CLIP latents and diffusion.

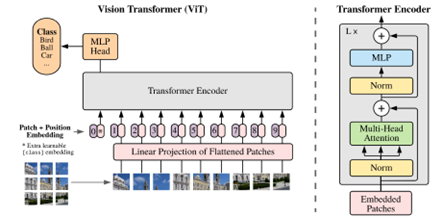

The extension of this architecture to computer vision tasks, called the Vision Transformer, was introduced in [2]. The idea is to process an image by dividing it into patches and flattening each patch into a vector, so that the image is represented as a sequence of vectors.

Fig. 1 Vision Transformer architecture [2], whose Transformer encoder is inspired by the one used in NLP [1]
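The patch-splitting step can be sketched in a few lines of NumPy. This is a minimal illustration, not the reference implementation from [2]: the helper name `image_to_patches`, the 224x224 RGB input, and the 16x16 patch size are assumptions chosen for the example.

```python
import numpy as np

def image_to_patches(image: np.ndarray, patch_size: int) -> np.ndarray:
    """Split an (H, W, C) image into flattened, non-overlapping patches.

    Returns an array of shape (N, patch_size * patch_size * C),
    where N = (H // patch_size) * (W // patch_size).
    """
    h, w, c = image.shape
    if h % patch_size or w % patch_size:
        raise ValueError("image height and width must be divisible by patch_size")
    rows, cols = h // patch_size, w // patch_size
    # (rows, P, cols, P, C) -> (rows, cols, P, P, C): group pixels by patch
    patches = image.reshape(rows, patch_size, cols, patch_size, c)
    patches = patches.transpose(0, 2, 1, 3, 4)
    # Flatten every patch into a single vector
    return patches.reshape(rows * cols, patch_size * patch_size * c)

# Hypothetical input: a 224x224 RGB image filled with dummy values
image = np.arange(224 * 224 * 3, dtype=np.float32).reshape(224, 224, 3)
patches = image_to_patches(image, patch_size=16)
print(patches.shape)  # (196, 768)
```

With these sizes the image becomes a sequence of 14 x 14 = 196 vectors, each of length 16 x 16 x 3 = 768.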

The flattened patches are mapped by a trainable linear projection to D dimensions, and the output is called the Embedded Patches. All layers of the transformer use a constant latent vector size D. After the projection, positional embeddings are added to the Embedded Patches, and the result is the input of the Transformer Encoder.
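As a rough sketch of this step, the projection and the positional embeddings are just a matrix multiplication followed by an addition. The names `W_proj`, `b_proj`, and `pos_embed`, the random initialisation, and the sizes below are assumptions for illustration; in a real model these parameters are learned, and the learnable classification token used in [2] is left out here for brevity.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes (assumed, not prescribed by the text)
num_patches, patch_dim, D = 196, 768, 64

# Stand-in for the flattened patches of one image
flat_patches = rng.normal(size=(num_patches, patch_dim))

# Trainable parameters, randomly initialised here as placeholders
W_proj = rng.normal(scale=0.02, size=(patch_dim, D))
b_proj = np.zeros(D)
pos_embed = rng.normal(scale=0.02, size=(num_patches, D))

# Linear projection to D dimensions: the "Embedded Patches"
embedded_patches = flat_patches @ W_proj + b_proj

# Adding one positional embedding per patch gives the encoder input
encoder_input = embedded_patches + pos_embed
print(encoder_input.shape)  # (196, 64)
```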

This Encoder alternates layers of multi-headed self-attention and MLP blocks. The self-attention mechanism is very effective: it allows the Vision Transformer to integrate information across the whole image, even in the lowest layers. It works by computing inner products between all pairs of elements in the sequence and turning them into attention scores that determine how much each element contributes to the representation of the others. An example of attention output is illustrated in Fig. 2.

Fig. 2 Illustrative example of Attention output [2]
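The pairwise inner products and attention scores described above can be sketched as single-head scaled dot-product self-attention, following the formulation in [1]. The function names, the random weight matrices, and the tiny sizes are assumptions made for the example.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    # Subtract the maximum for numerical stability
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, W_q, W_k, W_v):
    """Single-head self-attention over a sequence x of shape (N, D)."""
    q, k, v = x @ W_q, x @ W_k, x @ W_v
    # Inner products between all pairs of elements, scaled by sqrt(d_k)
    scores = q @ k.T / np.sqrt(k.shape[-1])
    # Each row becomes a probability distribution over the sequence
    weights = softmax(scores, axis=-1)
    # Every output element is a weighted sum of all value vectors
    return weights @ v, weights

rng = np.random.default_rng(0)
N, D = 5, 8  # toy sequence length and latent size
x = rng.normal(size=(N, D))
W_q, W_k, W_v = (rng.normal(size=(D, D)) for _ in range(3))

out, weights = self_attention(x, W_q, W_k, W_v)
print(out.shape, weights.shape)  # (5, 8) (5, 5)
```

Because the score matrix has one entry per pair of elements, every patch can attend to every other patch in a single layer.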

Multi-headed attention improves the performance of the attention layer: each head can focus on different positions, so several representation subspaces are computed in parallel and their outputs are fused into one.
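A minimal sketch of this idea, assuming the latent size D is split evenly across heads and that the head outputs are concatenated and fused by an output projection `W_o`, as in [1]. All names and sizes are illustrative.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_self_attention(x, W_q, W_k, W_v, W_o, num_heads):
    """Multi-head self-attention over a sequence x of shape (N, D)."""
    n, d = x.shape
    if d % num_heads:
        raise ValueError("D must be divisible by num_heads")
    d_head = d // num_heads

    def split_heads(t):
        # (N, D) -> (num_heads, N, d_head): one subspace per head
        return t.reshape(n, num_heads, d_head).transpose(1, 0, 2)

    q, k, v = split_heads(x @ W_q), split_heads(x @ W_k), split_heads(x @ W_v)
    # Attention is computed independently, and in parallel, for every head
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)  # (heads, N, N)
    heads = softmax(scores, axis=-1) @ v                 # (heads, N, d_head)
    # Concatenate the heads and fuse them with the output projection
    concat = heads.transpose(1, 0, 2).reshape(n, d)
    return concat @ W_o

rng = np.random.default_rng(0)
N, D, H = 5, 8, 2  # toy sizes
x = rng.normal(size=(N, D))
W_q, W_k, W_v, W_o = (rng.normal(size=(D, D)) for _ in range(4))

out = multi_head_self_attention(x, W_q, W_k, W_v, W_o, num_heads=H)
print(out.shape)  # (5, 8)
```

The output keeps the shape of the input, which is what lets the encoder stack such layers at a constant latent size D.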

One popular approach that works well for image classification is to pre-train Vision Transformers on large datasets and then fine-tune them on a smaller dataset for a specific task, at a higher resolution than in pre-training.

Author: Gabriela Ghimpeteanu, Coronis Computing.

Bibliography

[1] Vaswani, Ashish, et al. “Attention is all you need.” Advances in neural information processing systems 30 (2017).

[2] Dosovitskiy, Alexey, et al. “An image is worth 16x16 words: Transformers for image recognition at scale.” arXiv preprint arXiv:2010.11929 (2020).