Deep neural networks (DNNs) are powerful tools for accurate predictions in various applications and have even shown to be superior to human experts in some domains, for instance for Melanoma detection.

However, as demonstrated in a previous post, they are susceptible to data artifacts present in the data. In mole classification tasks, the usage of several data artifacts has been detected, for example band-aids, rulers, and skin markers.

Model Correction with Explainable AI

Various model correction methods have been introduced to guide model behavior with expert knowledge during training. For example, Right for the Right Reasons (RRR) [1] aligns the model behavior with an explanation prior. Specifically, RRR leverages binary masks for undesired input features, e.g., data artifacts, and penalizes the use of features in that region, measured by the gradient during training. Alternatively, Contextual Decomposition Explanation Penalization (CDEP) [2] proposes to use CD importance scores for a feature subset based on the forward pass instead of gradient to align the model’s attention. Approaching model correction from another perspective, Class Artifact Compensation (ClArC) [3] models the artifact in latent space using Concept Activation Vectors. The model behavior with respect to the artifact is then unlearned either by finetuning the model and augmenting samples with the modeled artifact or by projecting out the artifact during test phase. We refer to our previous blog post on ClArC methods for additional details.

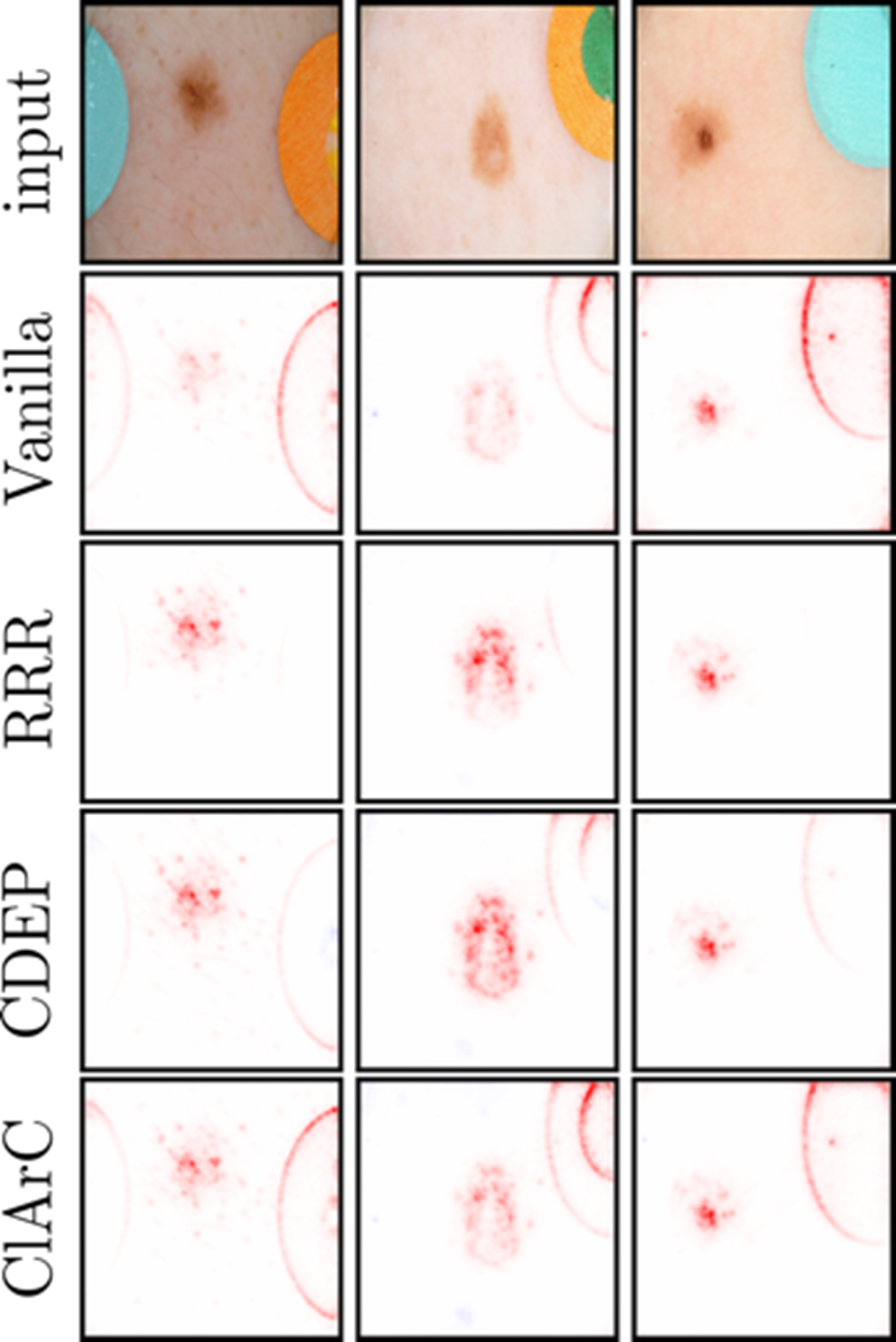

Figure 1: Explanations for the prediction of three samples containing the band-aid artifact using the original model (Vanilla) and corrected models with RRR, CDEP and ClArC. Regions highlighted in red are features used by the model for skin cancer prediction. Note that all correction method either increase the focus on the mole or decrease the focus on the band-aid artifact, in comparison with the Vanilla model.

Figure 1: Explanations for the prediction of three samples containing the band-aid artifact using the original model (Vanilla) and corrected models with RRR, CDEP and ClArC. Regions highlighted in red are features used by the model for skin cancer prediction. Note that all correction method either increase the focus on the mole or decrease the focus on the band-aid artifact, in comparison with the Vanilla model.

In Fig. 1, we show explanations for the original model (Vanilla) and corrected models using various correction approaches for samples containing the band-aid artifact using a VGG-16 model. The models corrected with RRR and CDEP have a reduced attention on the artifact regions. While ClArC does increase the relevance on the mole, it does not decrease the attention put onto the artifact. This is because ClArC does not actively penalize the usage of artifacts, but instead encourages the model to find alternative prediction strategies. The results are obtained from our recent paper on iterative XAI-based model improvement [4].

Conclusions

DNNs may use spurious features present in the data, which causes risks when deploying these models in practice. In this post, we have summarized and applied three XAI-based model correction approaches (RRR, CDEP and ClArC) to unlearn undesired model behavior.

Relevance to iToBoS

In iToBoS, many different AI systems will be trained for specific tasks, which in combination will culminate in an “AI Cognitive Assistant”. All those systems will need to be explained with suitable XAI approaches to elucidate all possible and required aspects of the systems’ decision making. Throughout the iToBoS project, we must detect and avoid the usage of data artifacts for model predictions.

Authors

Frederik Pahde, Fraunhofer Heinrich-Hertz-Institute; Maximilian Dreyer, Fraunhofer Heinrich-Hertz-Institute; Sebastian Lapuschkin, Fraunhofer Heinrich-Hertz-Institute

References

[1] Ross, A. S., Hughes, M. C., & Doshi-Velez, F. (2017). Right for the right reasons: Training differentiable models by constraining their explanations. arXiv preprint arXiv:1703.03717.

[2] Rieger, L., Singh, C., Murdoch, W., & Yu, B. (2020, November). Interpretations are useful: penalizing explanations to align neural networks with prior knowledge. In International conference on machine learning (pp. 8116-8126). PMLR.

[3] Anders, C. J., Weber, L., Neumann, D., Samek, W., Müller, K. R., & Lapuschkin, S. (2022). Finding and removing Clever Hans: using explanation methods to debug and improve deep models. Information Fusion, 77, 261-295.

[4] Pahde, F., Dreyer, M., Samek, W., & Lapuschkin, S. (2023). Reveal to Revise: An Explainable AI Life Cycle for Iterative Bias Correction of Deep Models. arXiv preprint arXiv:2303.12641.